Nutch is a mature, production-ready Web crawler. Nutch 1.x enables fine-grained configuration and relies on Apache Hadoop data structures, which are well suited to batch processing. Being pluggable and modular has its benefits: Nutch provides extensible interfaces such as Parse, Index and ScoringFilter for custom implementations, e.g. Apache Tika for parsing. Additionally, pluggable indexing exists for Apache Solr, Elasticsearch, SolrCloud, etc. With Nutch we can discover Web page hyperlinks in an automated manner, reduce maintenance work such as checking for broken links, and create a copy of all visited pages for searching over. This tutorial explains how to use Nutch with Apache Solr. Solr is an open source full-text search framework; with Solr we can search the pages acquired by Nutch. Apache Nutch supports Solr out of the box, simplifying Nutch-Solr integration. It also removes the legacy dependence upon both Apache Tomcat for running the old Nutch Web Application and upon Apache Lucene for indexing. Just download a binary release from the Apache Nutch download page.

By the end of this tutorial you will have:

- Configured a local Nutch crawler to crawl on one machine

- Learned how to understand and configure the Nutch runtime configuration, including seed URL lists, URLFilters, etc.

- Executed a Nutch crawl cycle and viewed the results in the Crawl Database

- Indexed Nutch crawl records into Apache Solr for full-text search

Any issues with this tutorial should be reported to the Nutch user@ list.

This tutorial describes the installation and use of Nutch 1.x (e.g. release cut from the master branch). For a similar Nutch 2.x with HBase tutorial, see Nutch2Tutorial.

Requirements

- Unix environment, or Windows-Cygwin environment

- Java Runtime/Development Environment (JDK 1.8 / Java 8)

- (Source build only) Apache Ant: https://ant.apache.org/

Option 1: Set up Nutch from a binary distribution

- Download a binary package (apache-nutch-1.X-bin.zip) from the Apache Nutch download page.

- Unzip your binary Nutch package. There should be a folder apache-nutch-1.X.

- cd apache-nutch-1.X/

From now on, we are going to use ${NUTCH_RUNTIME_HOME} to refer to the current directory (apache-nutch-1.X/).

Option 2: Set up Nutch from a source distribution

Advanced users may also use the source distribution:

- Download a source package (apache-nutch-1.X-src.zip)

- Unzip

- cd apache-nutch-1.X/

- Run ant in this folder (cf. RunNutchInEclipse)

- Now there is a directory runtime/local which contains a ready-to-use Nutch installation.

When the source distribution is used, ${NUTCH_RUNTIME_HOME} refers to apache-nutch-1.X/runtime/local/. Note that

- config files should be modified in apache-nutch-1.X/runtime/local/conf/

- ant clean will remove this directory (keep copies of modified config files)

- Run bin/nutch

- You can confirm a correct installation if it prints a usage message listing the available commands.

Some troubleshooting tips:

- If you are seeing 'Permission denied', make the script executable: chmod +x bin/nutch

- Set JAVA_HOME if you are seeing 'JAVA_HOME not set'. On Mac, you can export the variable in the current shell or add the export to ~/.bashrc.

On Debian or Ubuntu, you can likewise export JAVA_HOME in the current shell or add the export to ~/.bashrc.
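The two fixes above can be combined into a small shell sketch. It assumes it is run from ${NUTCH_RUNTIME_HOME}, that java is already on the PATH, and that GNU readlink -f is available (the Debian/Ubuntu case). The macOS line relies on the system helper /usr/libexec/java_home and is left commented out.

```shell
# Make the launcher scripts executable if they are present (fixes 'Permission denied').
for script in bin/nutch bin/crawl; do
  if [ -f "$script" ]; then chmod +x "$script"; fi
done

# Debian/Ubuntu: derive JAVA_HOME from the real location of the java binary.
if command -v java >/dev/null 2>&1; then
  java_bin="$(readlink -f "$(command -v java)")"
  export JAVA_HOME="${java_bin%/bin/java}"
fi

# macOS alternative (uncomment on a Mac):
# export JAVA_HOME="$(/usr/libexec/java_home)"

echo "JAVA_HOME=${JAVA_HOME:-not set}"
```

To make the setting permanent, the export line can be appended to ~/.bashrc.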

You may also have to update your /etc/hosts file. If so, you can add your machine name to the 127.0.0.1 entry, for example:

127.0.0.1 localhost.localdomain localhost LMC-032857

Note that the LMC-032857 above should be replaced with your machine name.

Nutch requires two configuration changes before a website can be crawled:

- Customize your crawl properties, where at a minimum, you provide a name for your crawler for external servers to recognize

- Set a seed list of URLs to crawl

Customize your crawl properties

- Default crawl properties can be viewed and edited within conf/nutch-default.xml, where most of these can be used without modification

- The file conf/nutch-site.xml serves as a place to add your own custom crawl properties that overwrite conf/nutch-default.xml. The only required modification for this file is to override the value field of the http.agent.name property

- i.e. add your agent name in the value field of the http.agent.name property in conf/nutch-site.xml

- Ensure that the plugin.includes property within conf/nutch-site.xml includes the indexer as indexer-solr
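As a minimal sketch of the http.agent.name override described above, the following writes an example file next to the real one instead of overwriting conf/nutch-site.xml. The file name nutch-site.example.xml and the agent name "My Nutch Spider" are placeholders chosen for illustration. The plugin.includes property is not shown because its default value differs between releases; copy it from conf/nutch-default.xml if you need to change it.

```shell
# Write an example file rather than overwriting the real conf/nutch-site.xml.
mkdir -p conf
cat > conf/nutch-site.example.xml <<'EOF'
<?xml version="1.0"?>
<configuration>
  <property>
    <name>http.agent.name</name>
    <!-- Placeholder agent name (an assumption); pick one that identifies your crawler. -->
    <value>My Nutch Spider</value>
  </property>
</configuration>
EOF

# Quick check: count the lines that declare the property.
grep -c '<name>http.agent.name</name>' conf/nutch-site.example.xml
```

The property element can then be merged by hand into the configuration element of conf/nutch-site.xml.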

Create a URL seed list

- A URL seed list includes a list of websites, one per line, which Nutch will look to crawl

- The file conf/regex-urlfilter.txt provides regular expressions that allow Nutch to filter and narrow the types of web resources to crawl and download

- mkdir -p urls

- cd urls

- touch seed.txt to create a text file seed.txt under urls/, then add one URL per line for each site you want Nutch to crawl, for example https://nutch.apache.org/.
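The seed list steps above can be sketched in one go. The single seed URL is only an example; it is the same domain used for the filter example later in this tutorial.

```shell
# Create the seed directory and a seed file with one URL per line.
mkdir -p urls
printf '%s\n' 'https://nutch.apache.org/' > urls/seed.txt

# Show what Nutch will be asked to crawl.
cat urls/seed.txt
```

Further sites can be added by appending more lines to urls/seed.txt.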

(Optional) Configure Regular Expression Filters

Edit the file conf/regex-urlfilter.txt and replace the catch-all rule at the end of the file (the line +. under the comment '# accept anything else') with a regular expression matching the domain you wish to crawl. For example, if you wished to limit the crawl to the nutch.apache.org domain, the line should read:

+^https?://([a-z0-9-]+\.)*nutch\.apache\.org/

This will include any URL in the domain nutch.apache.org.

NOTE: Not specifying any domains to include within regex-urlfilter.txt will lead to all domains linked from your seed URLs being crawled as well.
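Before starting a crawl it can help to try such a pattern against a few URLs. The sketch below uses grep -E as a stand-in for Nutch's Java regular expressions, which is an approximation that holds for simple patterns like this one. The helper name accepts and the sample URLs are made up for illustration.

```shell
# The rule as it would appear in conf/regex-urlfilter.txt is the pattern prefixed with '+':
#   +^https?://([a-z0-9-]+\.)*nutch\.apache\.org/
pattern='^https?://([a-z0-9-]+\.)*nutch\.apache\.org/'

# Hypothetical helper: succeeds when the URL matches the accept pattern.
accepts() { printf '%s\n' "$1" | grep -Eq "$pattern"; }

for url in https://nutch.apache.org/ http://wiki.nutch.apache.org/page https://example.org/; do
  if accepts "$url"; then echo "accept $url"; else echo "reject $url"; fi
done
```

The first two URLs fall inside the nutch.apache.org domain and are accepted; the third is rejected.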

Using Individual Commands for Whole-Web Crawling

NOTE: If you previously modified the file conf/regex-urlfilter.txt as covered above, you will need to change it back.

Whole-Web crawling is designed to handle very large crawls which may take weeks to complete, running on multiple machines. This also permits more control over the crawl process, and incremental crawling. It is important to note that whole-Web crawling does not necessarily mean crawling the entire World Wide Web. We can limit a whole-Web crawl to just a list of the URLs we want to crawl. This is done by using a filter just like the one configured in conf/regex-urlfilter.txt above.

Step-by-Step: Concepts

Nutch data is composed of:

- The crawl database, or crawldb. This contains information about every URL known to Nutch, including whether it was fetched, and, if so, when.

- The link database, or linkdb. This contains the list of known links to each URL, including both the source URL and anchor text of the link.

- A set of segments. Each segment is a set of URLs that are fetched as a unit. Segments are directories with the following subdirectories:

  - a crawl_generate names a set of URLs to be fetched

  - a crawl_fetch contains the status of fetching each URL

  - a content contains the raw content retrieved from each URL

  - a parse_text contains the parsed text of each URL

  - a parse_data contains outlinks and metadata parsed from each URL

  - a crawl_parse contains the outlink URLs, used to update the crawldb
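To see how far a segment has progressed through the crawl cycle, one can check which of these subdirectories exist. The sketch below assumes segments live under crawl/segments, as in the rest of this tutorial; check_segment is a hypothetical helper, not a Nutch command.

```shell
# Hypothetical helper: report which of the six expected subdirectories a segment has.
check_segment() {
  for part in crawl_generate crawl_fetch content parse_text parse_data crawl_parse; do
    if [ -d "$1/$part" ]; then echo "present  $part"; else echo "missing  $part"; fi
  done
}

# Inspect the newest segment, if a crawl has already produced one.
newest="$(ls -d crawl/segments/2* 2>/dev/null | tail -1 || true)"
if [ -n "$newest" ]; then check_segment "$newest"; else echo "no segments yet"; fi
```

A segment that has only been generated contains just crawl_generate; the other subdirectories appear as the fetch and parse steps run.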

Step-by-Step: Seeding the crawldb with a list of URLs

Bootstrapping from an initial seed list.

This option builds on the seed list created above: the injector adds the URLs found in the urls/ directory to the crawldb.
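A minimal sketch of the injection step, assuming the seed list lives in urls/ and the crawldb is kept at crawl/crawldb (both paths are conventions used in this tutorial, not requirements). The nutch wrapper function is hypothetical: it calls bin/nutch when the script exists and otherwise only prints the command, so the snippet can be tried outside a Nutch installation.

```shell
# Hypothetical wrapper: use the real launcher when present, otherwise print the command (dry run).
nutch() {
  if [ -x bin/nutch ]; then bin/nutch "$@"; else echo "bin/nutch $*"; fi
}

# Inject every URL listed in the files under urls/ into the crawldb at crawl/crawldb.
nutch inject crawl/crawldb urls
```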

Bootstrapping from DMOZ

Note: DMOZ closed in 2017. The steps below no longer work as written; you need to obtain DMOZ's content.rdf.u8.gz from elsewhere.

The injector adds URLs to the crawldb. Let's inject URLs from the DMOZ Open Directory. First we must download and uncompress the file listing all of the DMOZ pages. (This is a 200+ MB file, so this will take a few minutes.)

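Next we select a random subset of these pages. (We use a random subset so that everyone who runs this tutorial doesn't hammer the same sites.) DMOZ contains around three million URLs. We select one out of every 5,000 (the -subset 5000 option of the org.apache.nutch.tools.DmozParser tool), so that we end up with somewhere between several hundred and 1,000 URLs, depending on the size of the dump.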

The parser also takes a few minutes, as it must parse the full file. Finally, we initialize the crawldb with the selected URLs.

Now we have a Web database with around 1,000 as-yet unfetched URLs in it.

Step-by-Step: Fetching

To fetch, we first generate a fetch list from the database with the generate command. This produces a fetch list for all of the pages due to be fetched. The fetch list is placed in a newly created segment directory, which is named after the time it was created. We save the name of this segment in the shell variable s1.

Now we run the fetcher on this segment (the fetch command), then we parse the entries (the parse command).

When this is complete, we update the database with the results of the fetch (the updatedb command).

Now the database contains both updated entries for all initial pages as well as new entries that correspond to newly discovered pages linked from the initial set.
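The first round described above can be sketched as follows, assuming the crawldb is at crawl/crawldb and segments are written to crawl/segments. The nutch and latest_segment functions are hypothetical helpers: the first falls back to printing commands when bin/nutch is not available, the second picks the newest segment by relying on the timestamp-based directory names.

```shell
# Hypothetical wrapper: use the real launcher when present, otherwise print the command (dry run).
nutch() {
  if [ -x bin/nutch ]; then bin/nutch "$@"; else echo "bin/nutch $*"; fi
}

# Hypothetical helper: segment directories are named by timestamp, so the last one listed is the newest.
latest_segment() { ls -d crawl/segments/2* 2>/dev/null | tail -1 || true; }

nutch generate crawl/crawldb crawl/segments   # build a fetch list in a new segment
s1="$(latest_segment)"
echo "segment: ${s1:-none (dry run)}"

if [ -n "$s1" ]; then
  nutch fetch "$s1"                    # download the pages on the fetch list
  nutch parse "$s1"                    # extract text, metadata and outlinks
  nutch updatedb crawl/crawldb "$s1"   # merge fetch results and new links into the crawldb
fi
```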

Now we generate and fetch a new segment containing the top-scoring 1,000 pages, limiting the fetch list with the -topN option of generate.

Let's then fetch one more round in the same way.
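The two further rounds can be sketched with a small function, under the same path assumptions as before (crawl/crawldb and crawl/segments). The helper names nutch, latest_segment and crawl_round are made up for this example; without a Nutch installation the commands are only printed.

```shell
# Hypothetical wrapper: use the real launcher when present, otherwise print the command (dry run).
nutch() {
  if [ -x bin/nutch ]; then bin/nutch "$@"; else echo "bin/nutch $*"; fi
}

# Hypothetical helper: the newest segment is the last timestamp-named directory.
latest_segment() { ls -d crawl/segments/2* 2>/dev/null | tail -1 || true; }

# Hypothetical helper: one generate/fetch/parse/updatedb round limited to the top-scoring N pages.
crawl_round() {
  nutch generate crawl/crawldb crawl/segments -topN "$1" || { echo "nothing to fetch"; return 0; }
  segment="$(latest_segment)"
  if [ -z "$segment" ]; then echo "no segment found"; return 0; fi
  nutch fetch "$segment"
  nutch parse "$segment"
  nutch updatedb crawl/crawldb "$segment"
}

crawl_round 1000   # second round
crawl_round 1000   # third round
```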

By this point we've fetched a few thousand pages. Let's invert links and index them!

Step-by-Step: Invertlinks

Before indexing we first invert all of the links, so that we may index incoming anchor text with the pages.

We are now ready to search with Apache Solr.

Step-by-Step: Indexing into Apache Solr

Note: For this step you need a running Solr installation. If you have not yet integrated Nutch with Solr, read the Solr setup section below first.

Now we are ready to go on and index all the resources. For more information see the command line options.
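The link inversion and indexing steps can be sketched as below, again assuming the crawl/crawldb, crawl/linkdb and crawl/segments layout used in this tutorial and a Solr index writer that is already configured. The nutch wrapper is a hypothetical dry-run helper; which optional flags you want on the index command depends on your setup.

```shell
# Hypothetical wrapper: use the real launcher when present, otherwise print the command (dry run).
nutch() {
  if [ -x bin/nutch ]; then bin/nutch "$@"; else echo "bin/nutch $*"; fi
}

# Build the link database from all segments so incoming anchor text can be indexed.
nutch invertlinks crawl/linkdb -dir crawl/segments

# Index all segments. -filter and -normalize re-apply the URL filters and normalizers;
# -deleteGone also removes documents whose pages are gone.
nutch index crawl/crawldb -linkdb crawl/linkdb -dir crawl/segments -filter -normalize -deleteGone
```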

Step-by-Step: Deleting Duplicates

Duplicates (identical content but different URL) are optionally marked in the CrawlDb and are deleted later in the Solr index.

MapReduce 'dedup' job:

- Map: Identity map where keys are digests and values are CrawlDatum records

- Reduce: CrawlDatums with the same digest are marked (except one of them) as duplicates. There are multiple heuristics available to choose the item which is not marked as duplicate - the one with the shortest URL, fetched most recently, or with the highest score.
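The marking logic of the reduce step can be illustrated with standard shell tools. This is not Nutch's MapReduce code, only a sketch of the idea using the "highest score wins" heuristic; the three records (digest, URL, score) are invented sample data.

```shell
# Sample records: digest, URL, score (invented for illustration).
records='d1 http://example.org/a 1.0
d1 http://example.org/a?x=1 0.4
d2 http://example.org/b 0.7'

# Group by digest and order each group by descending score;
# the first record of each group is kept, the rest are marked as duplicates.
marked="$(printf '%s\n' "$records" \
  | LC_ALL=C sort -k1,1 -k3,3nr \
  | awk '{ status = ($1 == prev) ? "DUPLICATE" : "KEEP"; prev = $1; print status, $2 }')"

printf '%s\n' "$marked"
```

Here the two d1 records share a digest, so only the lower-scoring one is marked as a duplicate. Swapping the sort key would model the other heuristics (shortest URL, most recent fetch).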


Deletion in the index is performed by the cleaning job (see below) or if the index job is called with the command-line flag -deleteGone.

For more information see dedup documentation.

Step-by-Step: Cleaning Solr

The cleaning job scans a crawldb directory looking for entries with status DB_GONE (404), duplicates or, optionally, redirects, and sends delete requests to Solr for those documents. Once Solr receives the request, the documents are deleted. This keeps stale and duplicate documents out of the Solr index.

For more information see clean documentation.
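Deduplication and cleaning can be sketched as two commands run against the crawldb. This assumes a recent 1.x release where the index backend is taken from the index writer configuration; older releases may expect the Solr URL as an extra argument to clean, so check the usage message of your version. The nutch wrapper is a hypothetical dry-run helper.

```shell
# Hypothetical wrapper: use the real launcher when present, otherwise print the command (dry run).
nutch() {
  if [ -x bin/nutch ]; then bin/nutch "$@"; else echo "bin/nutch $*"; fi
}

nutch dedup crawl/crawldb   # mark duplicates in the crawldb
nutch clean crawl/crawldb   # send delete requests for gone and duplicate documents to the index
```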

Using the crawl script

If you have followed the section above on how crawling can be done step by step, you might be wondering how a bash script could be written to automate the whole process described above.

Nutch developers have written one for you, and it is available at bin/crawl. Running it without arguments prints its usage, including the most common options and parameters.

The crawl script has a lot of parameters set, and you can modify them to suit your needs. It is best to understand the parameters before setting up big crawls.
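A typical invocation might look like the sketch below. It assumes a release whose crawl script accepts the -i (index) and -s (seed directory) options; older releases used a different argument order, so compare with the usage message of your version. The directory name TestCrawl/ and the round count are arbitrary examples, and the crawl wrapper is a hypothetical dry-run helper.

```shell
# Hypothetical wrapper: use the real script when present, otherwise print the command (dry run).
crawl() {
  if [ -x bin/crawl ]; then bin/crawl "$@"; else echo "bin/crawl $*"; fi
}

# -i indexes the results into the configured index writers, -s names the seed directory;
# TestCrawl/ is the crawl directory and 2 is the number of rounds.
crawl -i -s urls/ TestCrawl/ 2
```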

Every version of Nutch is built against a specific Solr version, but you may also try a 'close' version.

| Nutch | Solr |
| --- | --- |
| 1.17 | 8.5.1 |
| 1.16 | 7.3.1 |
| 1.15 | 7.3.1 |
| 1.14 | 6.6.0 |
| 1.13 | 5.5.0 |
| 1.12 | 5.4.1 |

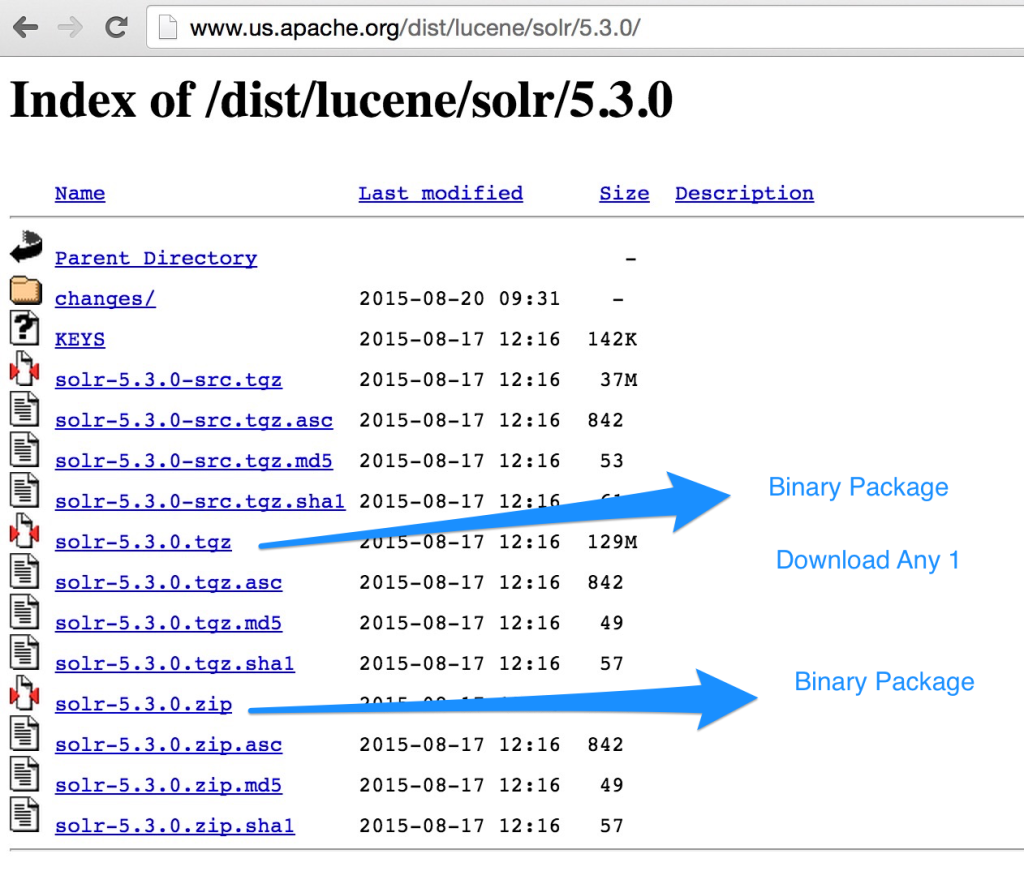

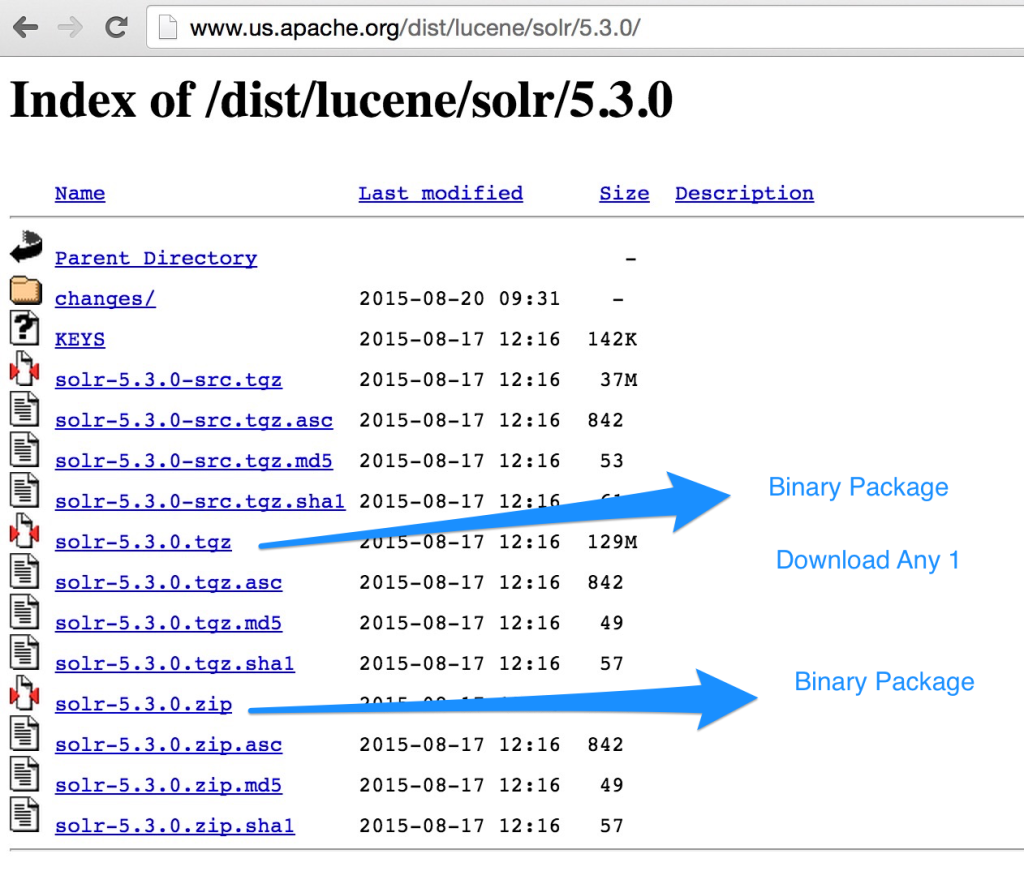

To install Solr 7.x (or upwards):

- download the binary file from the Apache Solr download page

- unzip to $HOME/apache-solr; we will now refer to this as ${APACHE_SOLR_HOME}

- create resources for a new 'nutch' Solr core

- copy Nutch's schema.xml into the Solr conf directory

  - (Nutch 1.15 or prior) copy the schema.xml from the conf/ directory

  - (Nutch 1.16) copy the schema.xml from the indexer-solr source folder (source package). Note: due to NUTCH-2745 the schema.xml is not contained in the binary package. Please download the schema.xml from the source repository.

  - You may also try to use the most recent schema.xml in case of issues launching Solr with this schema.

- make sure that there is no managed-schema 'in the way'

- start the Solr server

- create the nutch core
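The steps above can be sketched as a shell function. It assumes a Solr 7.x or 8.x layout with a _default configset under server/solr/configsets, and a schema.xml in Nutch's conf/ directory (the Nutch 1.15-or-prior case). The function name setup_nutch_core is made up, and it only runs when APACHE_SOLR_HOME points at a real installation.

```shell
# Hypothetical helper: $1 is the Solr home, $2 is the Nutch runtime home.
setup_nutch_core() {
  solr_home="$1"
  nutch_home="$2"
  configset="$solr_home/server/solr/configsets/nutch"

  # Create resources for the new core by copying Solr's default configset.
  mkdir -p "$configset"
  cp -r "$solr_home/server/solr/configsets/_default/." "$configset/"

  # Copy Nutch's schema.xml into the conf directory and remove any managed-schema.
  cp "$nutch_home/conf/schema.xml" "$configset/conf/"
  rm -f "$configset/conf/managed-schema"

  # Start Solr and create the 'nutch' core from the prepared configuration.
  "$solr_home/bin/solr" start
  "$solr_home/bin/solr" create -c nutch -d "$configset/conf/"
}

if [ -n "${APACHE_SOLR_HOME:-}" ] && [ -x "$APACHE_SOLR_HOME/bin/solr" ]; then
  setup_nutch_core "$APACHE_SOLR_HOME" "${NUTCH_RUNTIME_HOME:-.}"
else
  echo "APACHE_SOLR_HOME is not set to a Solr installation; nothing done"
fi
```

For Nutch 1.16, the schema.xml path in the second cp would need to point at the copy obtained from the source package instead.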

After that you need to point Nutch to the Solr instance:

- (Nutch 1.15 and later) edit the file conf/index-writers.xml, see IndexWriters

- (until Nutch 1.14) add the core name to the Solr server URL: -Dsolr.server.url=http://localhost:8983/solr/nutch

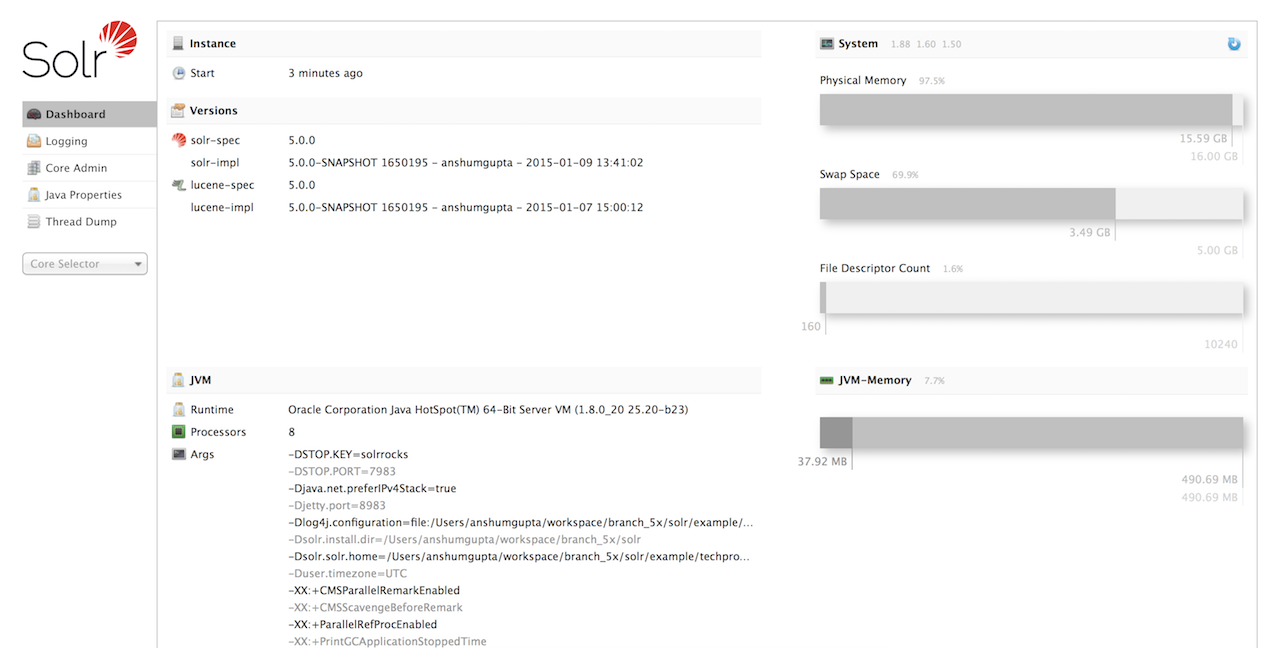

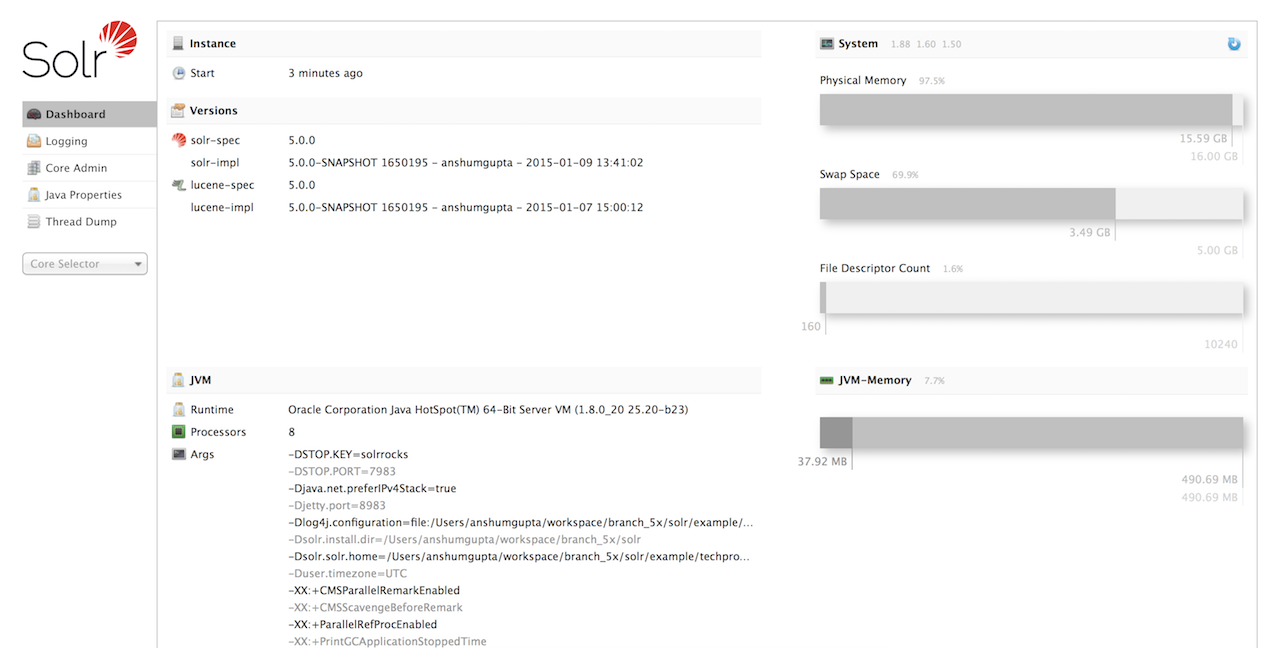

After you have started Solr, you should be able to access the admin console at http://localhost:8983/solr/#/

You should be able to navigate to the nutch core and view the managed-schema, etc.

You may want to check out the documentation for the Nutch 1.X REST API to get an overview of the work going on towards providing Apache CXF based REST services for the Nutch 1.X branch.